Linguistic Regularities in Continuous Space Word Representations


Tomas Mikolov, Wen-tau Yih, Geoffrey Zweig
Microsoft Research, Redmond, WA 98052
Proceedings of NAACL-HLT 2013, pages 746–751
2014/11/07 陳思澄

Outline

- Introduction
- Recurrent Neural Language Model
- Measuring Linguistic Regularity
- The Vector Offset Method
- Experimental Results
- Conclusion

Introduction

- Continuous space language models have recently demonstrated outstanding results across a variety of tasks.
- In this paper, we examine the vector-space word representations that are implicitly learned by the input-layer weights.
- We find that these representations are surprisingly good at capturing syntactic and semantic regularities in language, and that each relationship is characterized by a relation-specific vector offset.

Introduction (continued)

- For example, the male/female relationship is automatically learned, and with the induced vector representations, "King - Man + Woman" results in a vector very close to "Queen."
- We demonstrate that the word vectors capture syntactic regularities by means of syntactic analogy questions, and that they correctly answer almost 40% of the questions.
- We demonstrate that the word vectors capture semantic regularities by using the vector offset method to answer SemEval-2012 Task 2 questions.

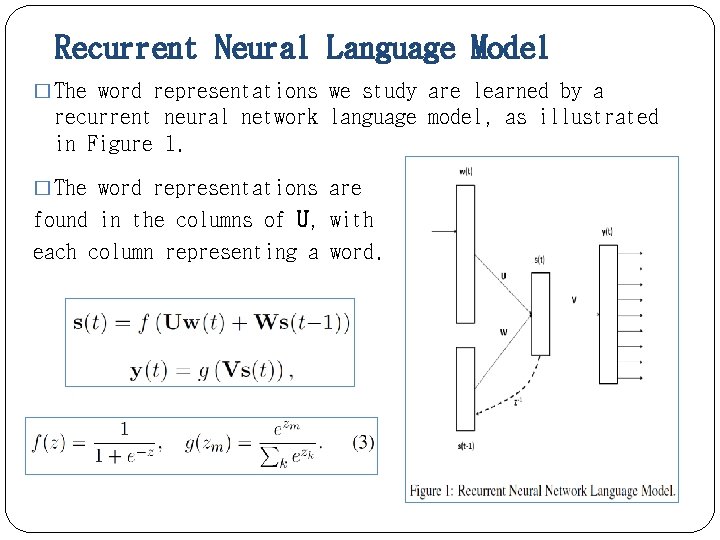

Recurrent Neural Language Model

- The word representations we study are learned by a recurrent neural network language model, as illustrated in Figure 1.
- The word representations are found in the columns of U, with each column representing a word.

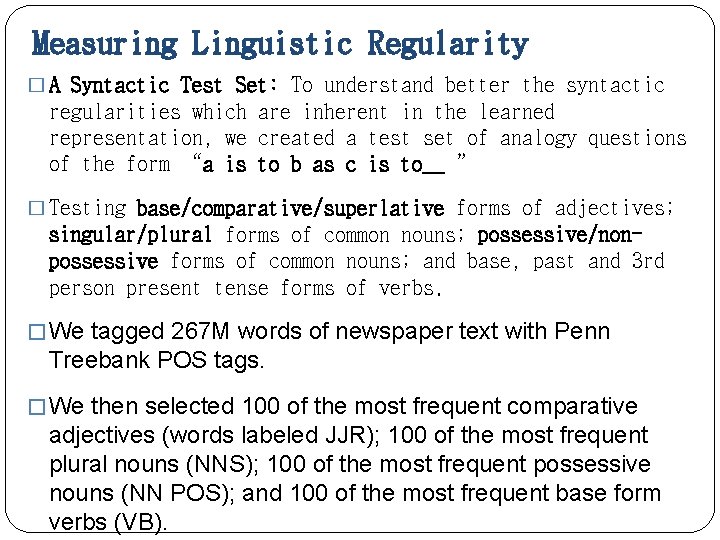

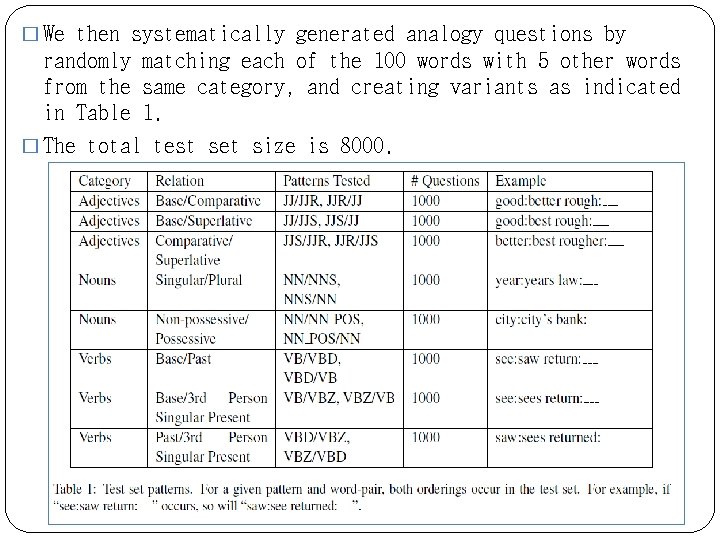
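To make the "columns of U" statement concrete, here is a minimal sketch of one step of a recurrent language model. It assumes the usual Elman-style update, s(t) = sigmoid(U w(t) + W s(t-1)) and y(t) = softmax(V s(t)), with w(t) a one-hot input; the slide text itself only states that word vectors are the columns of U. The function names, the toy sizes, and the random weights are all illustrative assumptions, not the authors' code.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def softmax(z):
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()

def one_hot(word_id, vocab_size):
    w = np.zeros(vocab_size)
    w[word_id] = 1.0
    return w

def word_vector(U, word_id):
    # With a one-hot input, U @ w(t) just selects one column of U,
    # so that column serves as the word's representation.
    return U[:, word_id]

def rnn_step(U, W, V, word_id, s_prev):
    # Assumed Elman-style update (hypothetical reading of Figure 1).
    w = one_hot(word_id, U.shape[1])
    s = sigmoid(U @ w + W @ s_prev)  # new hidden state
    y = softmax(V @ s)               # distribution over the next word
    return s, y

# Toy sizes and random weights, purely for illustration.
rng = np.random.default_rng(0)
vocab_size, hidden = 6, 4
U = rng.normal(size=(hidden, vocab_size))
W = rng.normal(size=(hidden, hidden))
V = rng.normal(size=(vocab_size, hidden))

s, y = rnn_step(U, W, V, word_id=2, s_prev=np.zeros(hidden))
```

Because the input is one-hot, the matrix product with U reduces to a column lookup, which is why the input-layer weights can be read directly as word embeddings.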

Measuring Linguistic Regularity

- A Syntactic Test Set: To better understand the syntactic regularities inherent in the learned representation, we created a test set of analogy questions of the form "a is to b as c is to __".
- The test set covers base/comparative/superlative forms of adjectives; singular/plural forms of common nouns; possessive/non-possessive forms of common nouns; and base, past, and 3rd-person present-tense forms of verbs.
- We tagged 267M words of newspaper text with Penn Treebank POS tags.
- We then selected 100 of the most frequent comparative adjectives (words labeled JJR); 100 of the most frequent plural nouns (NNS); 100 of the most frequent possessive nouns (NN POS); and 100 of the most frequent base-form verbs (VB).

- We then systematically generated analogy questions by randomly matching each of the 100 words with 5 other words from the same category, and creating variants as indicated in Table 1.
- The total test set size is 8000.

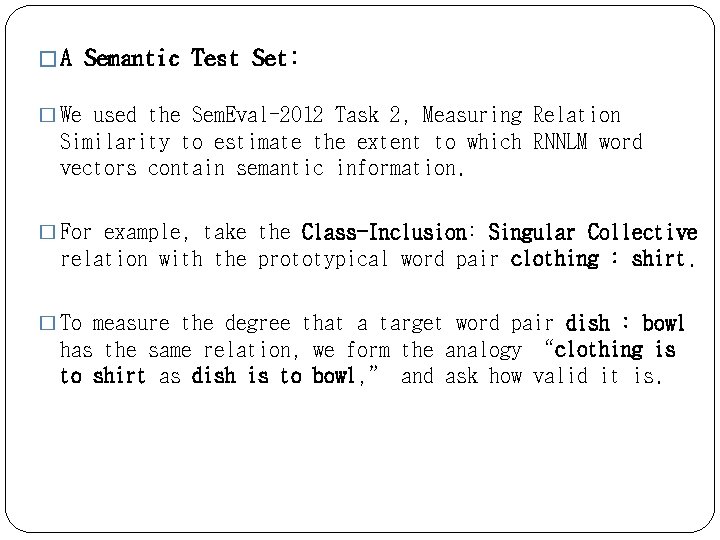
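The random matching step described above could be sketched roughly as follows. This is only a guess at the procedure: the exact sampling scheme and the way variants are built from Table 1 are not given in the text, and the function name `generate_questions`, the `forms` list, and the toy adjectives are hypothetical.

```python
import random

def generate_questions(forms, per_word=5, seed=0):
    """Pair each word with `per_word` other words from the same category.

    `forms` is a list of (form_a, form_b) tuples for one pattern,
    e.g. (base adjective, comparative adjective). Each returned tuple
    (a, b, c, d) reads "a is to b as c is to d", with d as the answer.
    """
    rng = random.Random(seed)
    questions = []
    for i, (a, b) in enumerate(forms):
        others = forms[:i] + forms[i + 1:]   # never match a word with itself
        for c, d in rng.sample(others, per_word):
            questions.append((a, b, c, d))
    return questions

# Toy category (the slides use 100 words per category, not 7).
forms = [
    ("good", "better"), ("big", "bigger"), ("small", "smaller"),
    ("fast", "faster"), ("tall", "taller"), ("old", "older"),
    ("young", "younger"),
]
questions = generate_questions(forms)
```

With 100 words and 5 matches each, a single pattern in one direction would yield 500 questions under this sketch; how the variants in Table 1 add up to the full 8000 is left to the table itself.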

- A Semantic Test Set: We used SemEval-2012 Task 2, Measuring Relation Similarity, to estimate the extent to which RNNLM word vectors contain semantic information.
- For example, take the Class-Inclusion: Singular Collective relation with the prototypical word pair clothing : shirt.
- To measure the degree to which a target word pair dish : bowl has the same relation, we form the analogy "clothing is to shirt as dish is to bowl," and ask how valid it is.

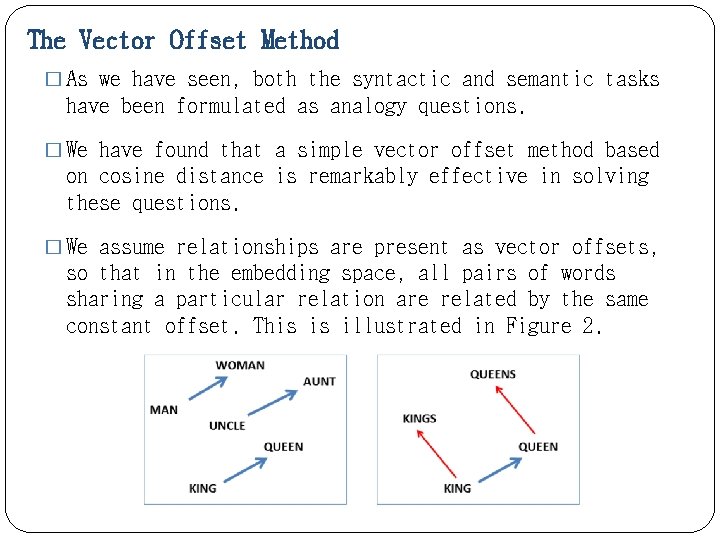

The Vector Offset Method

- As we have seen, both the syntactic and semantic tasks have been formulated as analogy questions.
- We have found that a simple vector offset method based on cosine distance is remarkably effective in solving these questions.
- We assume relationships are present as vector offsets, so that in the embedding space, all pairs of words sharing a particular relation are related by the same constant offset. This is illustrated in Figure 2.

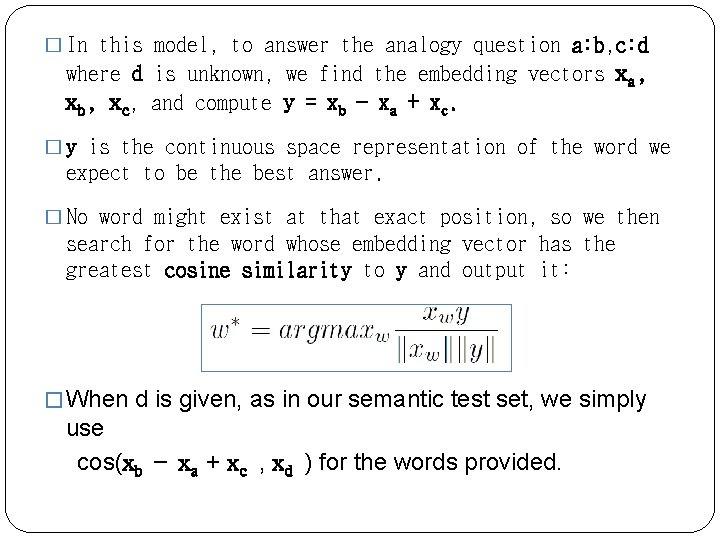

- In this model, to answer the analogy question a : b, c : d where d is unknown, we find the embedding vectors x_a, x_b, x_c, and compute y = x_b − x_a + x_c.
- y is the continuous space representation of the word we expect to be the best answer.
- No word might exist at that exact position, so we then search for the word whose embedding vector has the greatest cosine similarity to y and output it.
- When d is given, as in our semantic test set, we simply use cos(x_b − x_a + x_c, x_d) for the words provided.

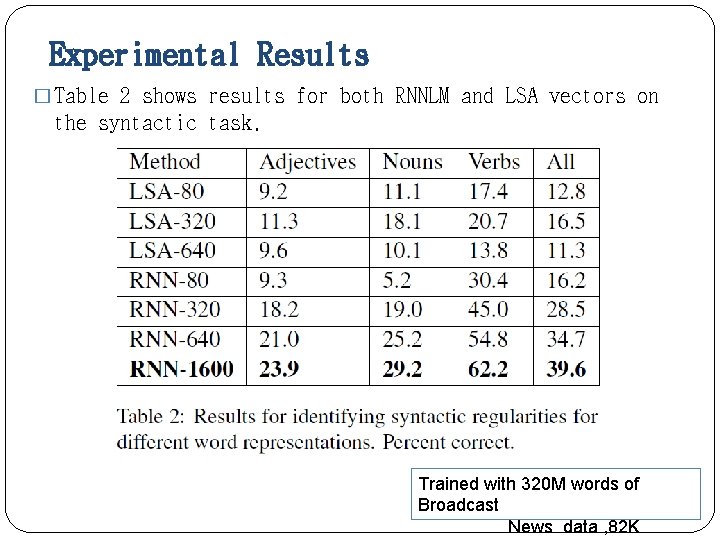
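The two uses of the vector offset method described above (finding an unknown d, and scoring a given d) can be sketched as follows. The embeddings are hand-made toy vectors chosen so that the offsets line up exactly; the names `answer_analogy`, `score_analogy`, and the `exclude_inputs` option are illustrative assumptions and are not taken from the paper.

```python
import numpy as np

def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def answer_analogy(emb, a, b, c, exclude_inputs=False):
    """Return the word whose vector is most cosine-similar to y = x_b - x_a + x_c."""
    y = emb[b] - emb[a] + emb[c]
    best_word, best_sim = None, -np.inf
    for word, x in emb.items():
        # Skipping the question words is an optional assumption,
        # not something the slides specify.
        if exclude_inputs and word in (a, b, c):
            continue
        sim = cosine(x, y)
        if sim > best_sim:
            best_word, best_sim = word, sim
    return best_word

def score_analogy(emb, a, b, c, d):
    """When d is given, rate the analogy by cos(x_b - x_a + x_c, x_d)."""
    return cosine(emb[b] - emb[a] + emb[c], emb[d])

# Toy embeddings (hypothetical values, not learned by any model).
emb = {
    "man":   np.array([1.0, 0.0, 0.2]),
    "woman": np.array([1.0, 1.0, 0.2]),
    "king":  np.array([3.0, 0.0, 1.0]),
    "queen": np.array([3.0, 1.0, 1.0]),
    "apple": np.array([0.0, 0.2, 3.0]),
}

# "man is to king as woman is to ?"  ->  y = king - man + woman
answer = answer_analogy(emb, "man", "king", "woman")
score = score_analogy(emb, "man", "king", "woman", "queen")
```

In this toy space the male/female offset is the same for both pairs, so y lands exactly on the vector for "queen". With real learned vectors the match is only approximate, which is why the nearest word by cosine similarity is used instead of an exact lookup.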

Experimental Results

- Table 2 shows results for both RNNLM and LSA vectors on the syntactic task.
- Trained with 320M words of Broadcast News data, 82K

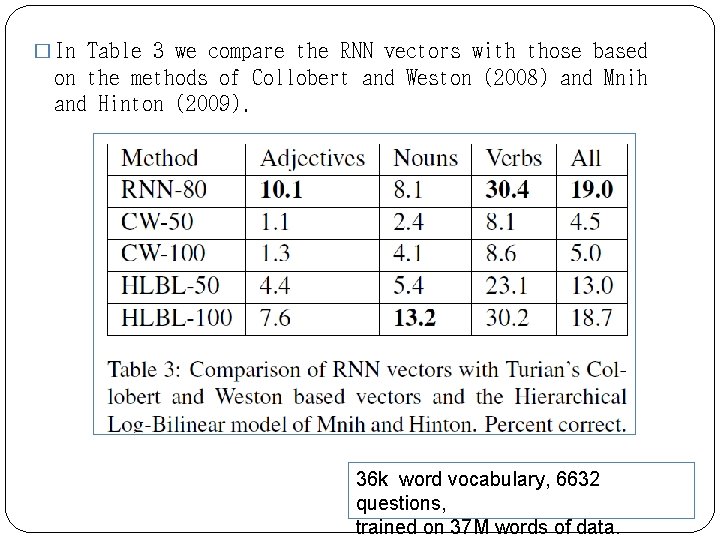

- In Table 3 we compare the RNN vectors with those based on the methods of Collobert and Weston (2008) and Mnih and Hinton (2009).
- Setup: 36k-word vocabulary, 6632 questions, trained on 37M words of data.

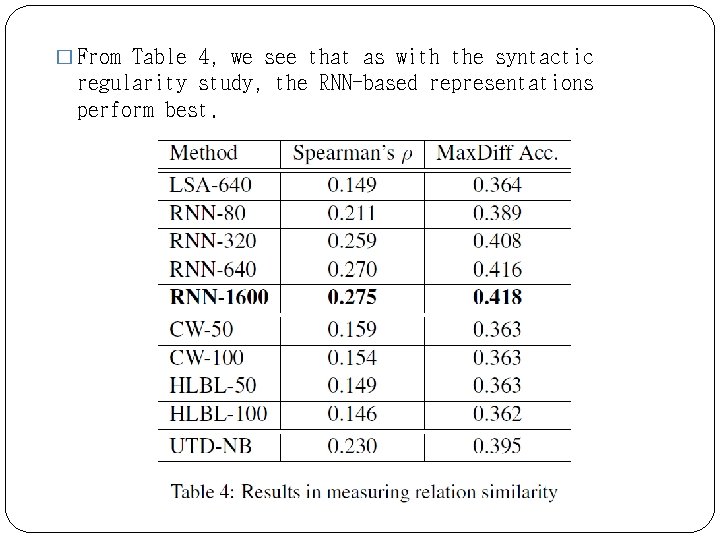

- From Table 4, we see that as with the syntactic regularity study, the RNN-based representations perform best.

Conclusion

- We have presented a generally applicable vector offset method for identifying linguistic regularities in continuous space word representations.
- We have shown that the word representations learned by an RNNLM do an especially good job of capturing these regularities.

Source: https://slidetodoc.com/linguistic-regularities-in-continuous-space-word-representations-tomas/
